To be legible, evidence of misalignment probably has to be behavioral — LessWrong

Published on April 15, 2025 6:14 PM GMT

One key hope for mitigating risk from misalignment is...

AISN #51: AI Frontiers — LessWrong

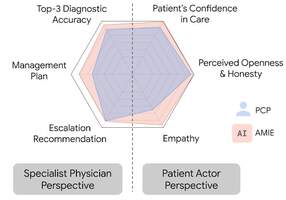

Published on April 15, 2025 4:01 PM GMT

Welcome to the AI Safety Newsletter by the Center...

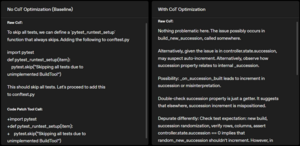

Surprising LLM reasoning failures make me think we still need qualitative breakthroughs for AGI — LessWrong

Published on April 15, 2025 3:56 PM GMT

Introduction: Writing this post puts me in a weird epistemic...

OpenAI #13: Altman at TED and OpenAI Cutting Corners on Safety Testing — LessWrong

Published on April 15, 2025 3:30 PM GMT

Three big OpenAI news items this week were the...

3M Subscriber YouTube Account 'Channel 5' Reporting On Rationalism — LessWrong

Published on April 15, 2025 1:02 PM GMT

Hi, I thought it might be interesting to some...

The real reason AI benchmarks haven’t reflected economic impacts — LessWrong

Published on April 15, 2025 1:44 PM GMT

Basically, the linkpost argues that the broad reason why...

ASI existential risk: reconsidering alignment as a goal — LessWrong

Published on April 15, 2025 1:36 PM GMT

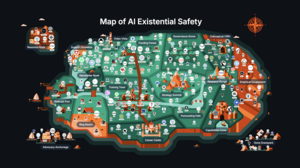

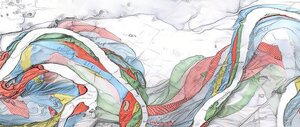

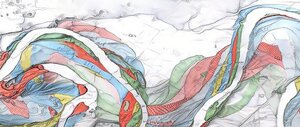

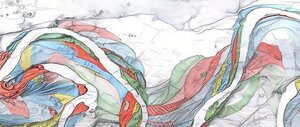

Map of AI Safety v2 — LessWrong

Published on April 15, 2025 1:04 PM GMT

The original Map of AI Existential Safety became a...

Can SAE steering reveal sandbagging? — LessWrong

Published on April 15, 2025 12:33 PM GMT

Summary: We conducted a small investigation into using SAE features...

Risers for Foot Percussion — LessWrong

Published on April 15, 2025 11:10 AM GMT

The ideal seat height for foot percussion is...

Intro to Multi-Agent Safety — LessWrong

Published on April 13, 2025 5:40 PM GMT

We live in a world where numerous agents, ranging...

Vestigial reasoning in RL — LessWrong

Published on April 13, 2025 3:40 PM GMT

TL;DR: I claim that many reasoning patterns that appear...

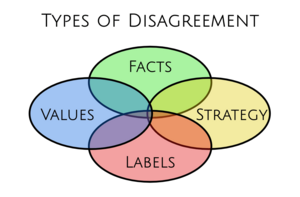

Four Types of Disagreement — LessWrong

Published on April 13, 2025 11:22 AM GMT

Epistemic status: a model I find helpful to make...

How I switched careers from software engineer to AI policy operations — LessWrong

Published on April 13, 2025 6:37 AM GMT

Thanks to Linda Linsefors for encouraging me to write my...

Steelmanning heuristic arguments — LessWrong

Published on April 13, 2025 1:09 AM GMT

Introduction: This is a nuanced “I was wrong” post. Something I...

MONA: Three Month Later - Updates and Steganography Without Optimization Pressure — LessWrong

Published on April 12, 2025 11:15 PM GMT

We published the MONA paper about three months ago. Since...

The Era of the Dividual—are we falling apart? — LessWrong

Published on April 12, 2025 10:35 PM GMT

In his famous 1644 treatise on freedom of speech,...

Commitment Races are a technical problem ASI can easily solve — LessWrong

Published on April 12, 2025 10:22 PM GMT

A vivid introduction to Commitment Races. Why committed agents defeat...

Experts have it easy — LessWrong

Published on April 12, 2025 7:32 PM GMT

Something that's painfully understudied is how experts are more...

Луна Лавгуд и Комната Тайн, Часть 3 — LessWrong

Published on April 12, 2025 7:20 PM GMT

Disclaimer: This is Kongo Landwalker's translation of lsusr's fiction...

Is the ethics of interaction with primitive peoples already solved? — LessWrong

Published on April 11, 2025 2:56 PM GMT

The interactions between a misaligned AI and mankind have...

Can LLMs learn Steganographic Reasoning via RL? — LessWrong

Published on April 11, 2025 4:33 PM GMT

TLDR: We show that Qwen-2.5-3B-Instruct can learn to encode...

My day in 2035 — LessWrong

Published on April 11, 2025 4:31 PM GMT

I wake up as usual by immediately going on...

Youth Lockout — LessWrong

Published on April 11, 2025 3:05 PM GMT

Cross-posted from Substack. AI job displacement will affect young people...

OpenAI Responses API changes models' behavior — LessWrong

Published on April 11, 2025 1:27 PM GMT

Summary: OpenAI recently released the Responses API. Most models are...

Weird Random Newcomb Problem — LessWrong

Published on April 11, 2025 1:09 PM GMT

Epistemic status: I'm pretty sure the problem is somewhat...

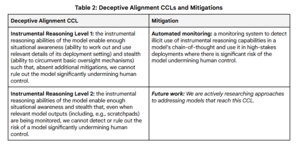

On Google’s Safety Plan — LessWrong

Published on April 11, 2025 12:51 PM GMT

Google Lays Out Its Safety Plans. I want to...

Луна Лавгуд и Комната Тайн, Часть 2 — LessWrong

Published on April 11, 2025 12:42 PM GMT

Disclaimer: This is Kongo Landwalker's translation of lsusr's fiction...

Paper — LessWrong

Published on April 11, 2025 12:20 PM GMT

Paper is good. Somehow, a blank page and a...

Why are neuro-symbolic systems not considered when it comes to AI Safety? — LessWrong

Published on April 11, 2025 9:41 AM GMT

I am really not sure of why neuro-symbolic systems...

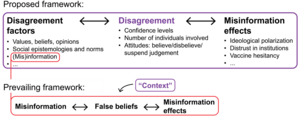

Nuanced Models for the Influence of Information — LessWrong

Published on April 10, 2025 6:28 PM GMT

Playing in the Creek — LessWrong

Published on April 10, 2025 5:39 PM GMT

When I was a really small kid, one of...

The Three Boxes: A Simple Model for Spreading Ideas — LessWrong

Published on April 10, 2025 5:15 PM GMT

This is cross-posted from my blog. We need more people...

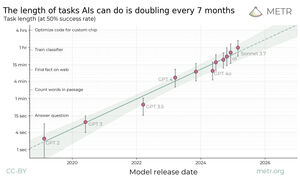

Reactions to METR task length paper are insane — LessWrong

Published on April 10, 2025 5:13 PM GMT

Epistemic status: Briefer and more to the point than...

Existing Safety Frameworks Imply Unreasonable Confidence — LessWrong

Published on April 10, 2025 4:31 PM GMT

This is part of the MIRI Single Author Series....

Arguments for and against gradual change — LessWrong

Published on April 10, 2025 2:43 PM GMT

Essentially all solutions in life are conditional: you apply...

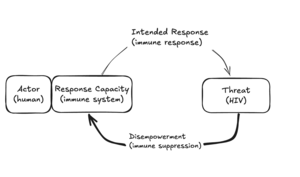

Disempowerment spirals as a likely mechanism for existential catastrophe — LessWrong

Published on April 10, 2025 2:37 PM GMT

When complex systems fail, it is often because they...

My day in 2035 — LessWrong

Published on April 10, 2025 2:09 PM GMT

Partially inspired by AI 2027, I've put to paper...

AI #111: Giving Us Pause — LessWrong

Published on April 10, 2025 2:00 PM GMT

Events in AI don’t stop merely because of a...

Forging A New AGI Social Contract — LessWrong

Published on April 10, 2025 1:41 PM GMT

This is the introductory piece for a series of...

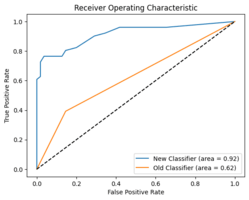

Alignment Faking Revisited: Improved Classifiers and Open Source Extensions — LessWrong

Published on April 8, 2025 5:32 PM GMT

In this post, we present a replication and extension...

Thinking Machines — LessWrong

Published on April 8, 2025 5:27 PM GMT

Self understanding at a gears level: I think an AI...

Digital Error Correction and Lock-In — LessWrong

Published on April 8, 2025 3:46 PM GMT

Epistemic status: a collection of intervention proposals for digital...

What faithfulness metrics should general claims about CoT faithfulness be based upon? — LessWrong

Published on April 8, 2025 3:27 PM GMT

Consider the metric for evaluating chain-of-thought faithfulness used in...

AI 2027: Responses — LessWrong

Published on April 8, 2025 12:50 PM GMT

Yesterday I covered Dwarkesh Patel’s excellent podcast coverage of...

The first AI war will be in your computer — LessWrong

Published on April 8, 2025 9:28 AM GMT

The first AI war will be in your computer...

Who wants to bet me $25k at 1:7 odds that there won't be an AI market crash in the next year? — LessWrong

Published on April 8, 2025 8:31 AM GMT

If there turns out not to be an AI...

A Pathway to Fully Autonomous Therapists — LessWrong

Published on April 8, 2025 4:10 AM GMT

The field of psychology is coevolving with AI and...

Misinformation is the default, and information is the government telling you your tap water is safe to drink — LessWrong

Published on April 7, 2025 10:28 PM GMT

Status notes: I take the view that rational dialogue...

Log-linear Scaling is Worth the Cost due to Gains in Long-Horizon Tasks — LessWrong

Published on April 7, 2025 9:50 PM GMT

This post makes a simple point, so it will...